As CEO and co-founder of Voquent, I firmly believe in upholding our company’s values and principles.

We always strive to promote responsible business practices and engage in essential debates, such as the implications of AI in voiceover.

Over the years, we have taken significant steps to protect voice actors and address their concerns about AI utilization. However, with the rise of ChatGPT and the growing interest in AI voiceovers and voice cloning, it is necessary to formalize our position on this matter.

Voquent will not support, accept, or participate in any project or job requests involving the creation or use of AI voice models, regardless of their intended purpose.

Here’s my reasoning behind this decision.

The Impact of AI Voices

The release of ChatGPT in November 2022 captivated the world. The development of AI has accelerated consumer-level utilisation of algorithmic plagiarism on an unprecedented scale; thousands of startups claim AI will replace swathes of traditional human services. These companies seek to capitalise on the hype, and AI voiceover and voice cloning tech are part of this movement.

AI voice clones are built on training models. The more audio uploaded, the more realistic and indistinguishable the voice clone sounds. Waiting for AI voices to advance before dealing with the objective threat that accompanies this technology simply multiplies the risks; dealing with this problem sooner, rather than later, is crucial.

Now to be clear: AI voiceovers and synthetic speech still do not offer a professionally competitive alternative to a human, custom-recorded voiceover. We are, however, very concerned that voice clone building apps are being offered to consumers as cheap, readily accessible toys on a wholly unregulated basis.

Unknowable Damage of Unregulated Tech

Our discomfort rests on the same principle that explains why certain types of products or tools can only be purchased with a license. Music is a prime example.

Napster was the first music file-sharing platform to prove that as many as 80 million people were willing to share or even steal music with no sense of guilt purely because “the platform existed and everyone was doing it”. Individual consumers are not legally obliged to adopt or practice moral principles. This consideration is why I do not believe AI voice cloning tools should be accessible at a consumer level.

Contrary to the statements made by other notable providers and platforms in the voiceover industry, we disagree that unconstrained and unregulated mass use of AI voice clones is a certainty. Voice cloning represents a severe erosion of fundamental human rights, which is unacceptable collateral damage in pursuing disruptive software development.

Identity Theft and Fraud through AI Voices

This technology represents a dangerous threat to everyone’s privacy, security and well-being, especially if you’re in the public eye, e.g. a content creator, actor or politician. Any consumer seeking to create a voice clone can easily use audiobooks, YouTube videos or interview archives to build a powerful AI voice clone for any desired purpose.

A recent headline example involved Martin Lewis, who hosts a popular money-saving TV series in the UK.

Designed for Deception

Effective communication is based on two fundamental principles:

- HOW a message is conveyed, and

- WHO is listening to or consuming the message.

Our brains are instinctively preconditioned to tune out what isn’t human. Artificial voices are detectable within seconds on mainstream mediums such as streaming, TV, video games, internet portals or radio; they aren’t fit for purpose in long-form, high-quality content.

However, a well-modelled AI voice clone over a poor-quality audio device (such as a phone) can take much longer to detect, and the cognitive capability of the listener also plays an important role. For instance, an AI voice clone could effortlessly hold an entire conversation with a young child or elderly relative without them realizing they are speaking with a clone.

Therefore, the duration before detection, as in the number of seconds before the average listener realizes something is wrong, becomes the most critical metric for a company seeking to sell AI voice clones and generative voiceovers. Their challenge is to extend that duration for as long as possible by minimising tell-tale signs such as:

- Stilted or unnatural delivery

- Unusual intonations

- Lack of variation in pitch

- Repetitive accentuations

- Emotional tone that doesn’t match the content

- Occasional glitches

Delivery and authenticity are essential. As soon as a listener suspects that they are listening to an AI voice, their trust in the speaker evaporates, and the perceived value of the message or content plummets. This is why, for mainstream audience consumption, where a voiceover typically extends beyond 15 seconds, AI voice clones cannot compete as a professionally viable alternative to a customized or live human voice.

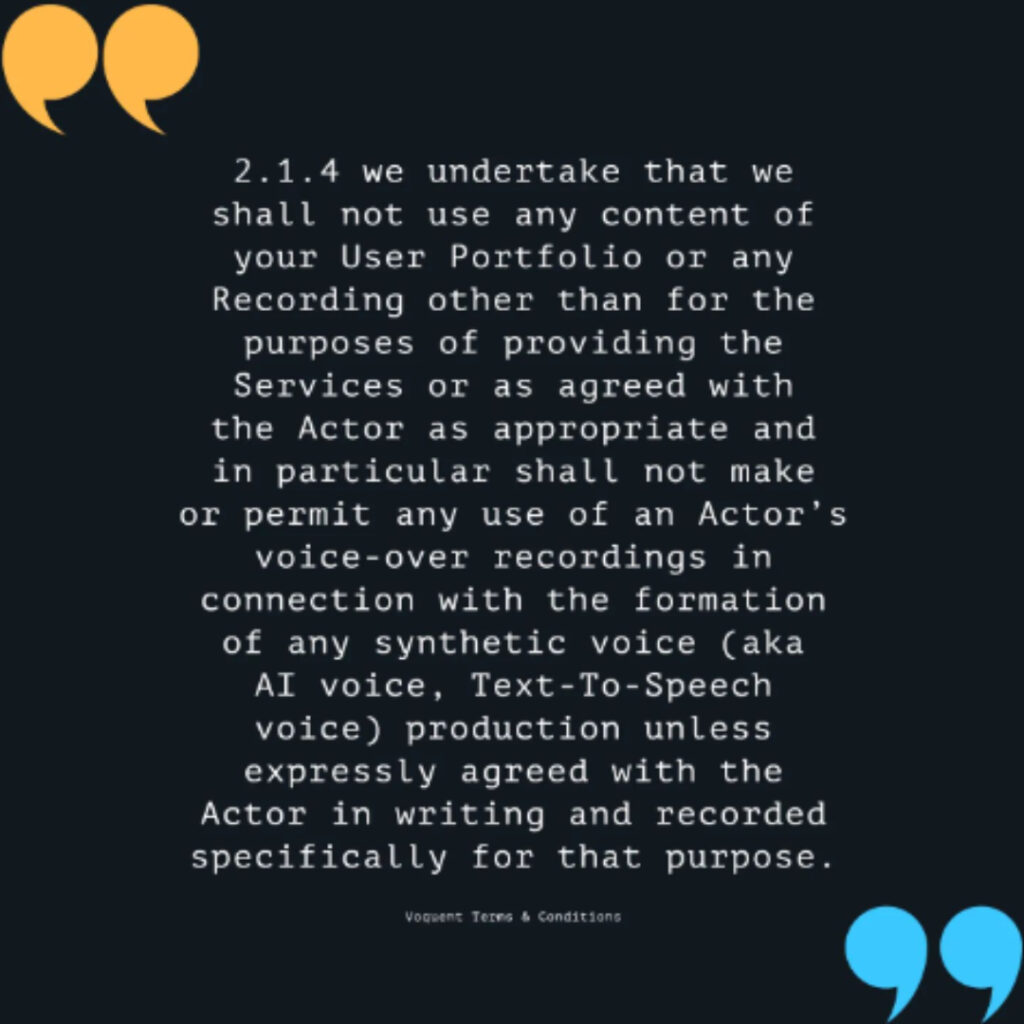

Prioritizing Safety

Last year, Voquent became the first significant voiceover provider to clarify its terms of use, alleviating voice actors’ fears regarding their audio demos, auditions or material being used to train AI or create synthetic voices. Our terms explicitly state that we will only use audio for generative AI models with the complete clarity and consent of all parties involved.

But the issues surrounding the enablement of AI voice cloning are not isolated to performing talents. We believe that AI identity cloning of any kind (visual or vocal) represents an equal threat to the lives and rights of ordinary people.

Current voice cloning technology represents one of the most powerful tools that has ever existed for deepfake impersonation (fake, harmful media built with deep learning AI) and misuse. Scammers, phishers, hackers and cybercriminals currently enjoy unconstrained access to and use of these tools, and this requires a level of proactive legislation that governments are not accustomed to.

Imagine your voice being used in a scam to trick your family into transferring emergency funds; consider the potential for your voice being used to trick your children’s teachers at school. The possibilities for exploitation are chilling, especially as voice cloning becomes more advanced.

A Bad Apple…

Everyone knows that big tech companies are willing to sacrifice the privacy and security of the broader public for profit as long as they can operate in a legally defensible grey area. Unfortunately, the concept of ethics and accountability in large corporations usually represents little more than a PR issue and an unwelcome distraction.

For instance, the White House had all the big tech companies sign an agreement ensuring safe, secure and trustworthy AI. There was one notable omission – Apple. Apple has spent years claiming to be a champion of user privacy, which makes its actions particularly puzzling.

Now, Apple appears to be preparing self-voice cloning as a consumer function on iPhones and has been showcasing the functionality in its forthcoming iOS 17 release, as reported here and here.

Tech companies that offer realistic-sounding, off-the-shelf TTS offerings have productised the identities of professional voice actors. Unsurprisingly, the volume and frequency of legal disputes are increasing; Greg Marston represents the latest professional voice actor to fall foul of big tech reselling a clone of his voice.

There was widespread outcry before Apple backtracked on its intended AI for audiobooks option. This shows that we can force change if we are vigilant and ready to stand up.

The Need for Consent

It is naive and irresponsible to leave this form of technology purely to self-regulation. We strongly believe that any company or person that seeks to use any form of vocal recording of a human being, modified, adapted, edited or otherwise, must have explicit consent from that individual to use an AI clone of their voice.

In particular, that consent should cover the exact words used and the mediums through which the audio will be transmitted, broadcast, delivered or consumed.

If the primary purpose of the use is to endorse, advocate or encourage sales for commercial gain, that license should be explicit about the mediums, the territory, the intended target audience or recipient, and the duration for which the recording can be used. Any company or individual that uses a voice clone without the permission of the individual on whom the clone was based is performing a level of identity theft and committing an act of fraud.

Private companies seeking to sell off-the-shelf TTS or realistic-sounding voice clones should be subject to local non-compliance penalties against the same audit standards as finance and insurance companies.

Fraud is and always has been a criminal act. Therefore, individuals who use voice clones to commit fraud, whether for their personal use or on behalf of a group or organization, must always be traceable and held personally liable.

Technology Uses Are Outpacing Data Privacy Laws

Governments are reactive entities, prioritizing initiatives linked to voter sentiment.

For this reason, updating data privacy or copyright laws to keep up with aggressive, privately funded technology developments is also mainly viewed as a PR consideration for incumbent lawmakers. In many cases, the act of using an AI voice clone is arguably breaking multiple existing laws:

- Non-consensual depictions and endorsements

- False representation and impersonation

- Plagiarism and unlicensed use of creative copyrights

- Fraud, abuse and identity theft

- Infringements on human rights

Government regulation will eventually seek to control AI voice cloning. Tech companies wanting to sell AI know this, so they seek to exploit the open market as widely and quickly as possible before these controls are introduced.

Conclusion

Amidst the safety concerns, it is easy to forget that voice cloning can offer genuine humanitarian benefits. People born with or affected by medical conditions such as ALS benefit massively from TTS and synthetic voiceover, and it’s always worth championing the positive potential that any technology can have for specialised usage. Still, the sad reality is that if we keep supporting this work, we’re accelerating the power and accessibility of tools subject to widespread misuse.

At Voquent, we are committed to responsible business practices and protecting the rights and safety of voice actors and the public. The potential benefits of voice cloning must clearly outweigh the risks.

For the foreseeable future, until we see explicit, recognizable intervention and regulation that will tightly control the uses and enablement of AI voice clones, we are no longer comfortable or willing to facilitate any projects that will support generative synthetic speech, even if it’s for good causes. From a human rights standpoint, there needs to be much greater awareness of the misuse of AI voice clones, and the criminal implications of their current or potential abuse can only be addressed by governments. The sooner, the better.

We hope that other individuals and companies in and outside our industry will give equal interest and consideration to the risks and dangers of unregulated and non-consensual formation and use of voice clones.
